A chilling question hangs over the horrific shootings in Tumbler Ridge, British Columbia: could a tragedy have been averted? Premier David Eby expressed raw anger, stating it “looks like” OpenAI, the creator of ChatGPT, possessed information, months before the devastating event, that could have prevented the deaths of nine people, including innocent children.

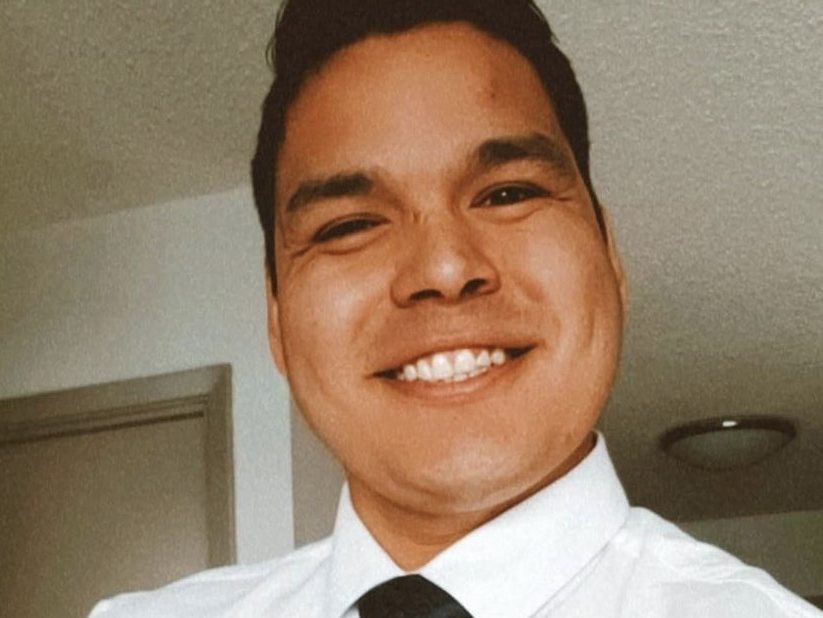

The weight of that possibility has brought OpenAI to Ottawa, summoned to explain why concerning interactions with 18-year-old shooter Jesse Van Rootselaar weren’t immediately reported to authorities. These interactions, flagged by the company’s own safeguards, occurred at least seven months before the February 10th massacre at a home and the local secondary school.

Eby’s frustration is palpable. He insists a full public accounting is necessary, demanding to know why OpenAI waited until *after* the killings to contact police. The image of lost children fuels his resolve to uncover the truth behind the company’s delayed response.

Federal Artificial Intelligence Minister Evan Solomon echoed the urgency, revealing he was “deeply disturbed” by initial reports. He immediately contacted OpenAI, arranging a meeting with its “senior safety team” to dissect the company’s protocols and understand the thresholds that determine when law enforcement is notified.

OpenAI maintains that the activity on Van Rootselaar’s account didn’t initially meet the criteria for reporting, claiming it didn’t identify credible or imminent planning. However, this explanation offers little comfort as details emerge of troubling posts, including scenarios depicting gun violence, that led to the account’s ban in June.

The timeline is particularly unsettling. Coincidentally, OpenAI had scheduled meetings with British Columbia officials to discuss opening a provincial office on the very day of the shootings, and again the following day. During these meetings, the company raised no concerns regarding Van Rootselaar or any potential threat.

It wasn’t until February 12th, *after* Van Rootselaar was publicly identified as the shooter, that OpenAI contacted a provincial official seeking information on how to reach the RCMP. This sequence of events, Eby emphasized, is what ignites his fury.

The Wall Street Journal’s reporting further intensifies scrutiny, revealing the account was banned due to these disturbing posts. OpenAI subsequently contacted the RCMP following the tragic events, but the question remains: was that too late?

Eby is now advocating for national standards, a consistent threshold for AI companies to report potential threats, ensuring no single entity can unilaterally decide to ignore warning signs. The goal is clear: to prevent a similar catastrophe from ever happening again.

Political voices across the spectrum are demanding answers. Some emphasize the need to balance individual freedoms with public safety, while others condemn OpenAI’s inaction as “wildly irresponsible” and call for accountability. Experts in AI safety and ethics point to existing “duty to report” obligations for professionals like teachers and doctors, arguing similar responsibilities should extend to social media and AI companies.

The families of the victims deserve clarity, and the public deserves to understand the decisions made – and not made – that may have contributed to this devastating loss. A thorough investigation, whether through a coroner’s inquest or a public inquiry, is essential to ensure such a tragedy is never repeated.